Manual infrastructure management was slowing down deployments and creating inconsistencies between environments. I built a complete Infrastructure as Code system with Terraform that reduced deployment time from hours to minutes while improving reliability and security.

The Manual Deployment Problem

Deployments were painful. An engineer would spend 2-3 hours clicking through the AWS console, creating VPCs, configuring security groups, launching EC2 instances, setting up RDS databases. Then they'd repeat the process for staging, testing, and production.

Mistakes were common. A misconfigured security group in production. Forgotten IAM permissions. Inconsistent instance sizes between environments. Each error meant troubleshooting, fixing, and hoping nothing broke.

When we needed to scale from 2 environments to 5, the team balked. Nobody wanted to spend up to 15 hours (five environments at 2-3 hours each) clicking through the AWS console. We needed automation.

The AWS console maze that consumed hours of manual configuration

Infrastructure as Code

I chose Terraform for three reasons: declarative syntax, state management, and ecosystem.

Declarative meant describing what infrastructure we wanted, not how to create it. Terraform figured out the steps. This made code reviewable—infrastructure changes were just pull requests.
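As a rough illustration of the declarative style (not the project's actual code; the CIDR range and tag values are placeholders), a VPC is described by its desired end state and Terraform works out the API calls:

```hcl
# Hypothetical example: declare the desired end state of a VPC.
# Terraform decides whether to create it, update it, or leave it alone.
resource "aws_vpc" "main" {
  cidr_block           = "10.0.0.0/16" # placeholder range
  enable_dns_support   = true
  enable_dns_hostnames = true

  tags = {
    Name        = "example-vpc"
    Environment = "staging"
  }
}
```

Changing a tag or a DNS flag here shows up as a small diff in a pull request, which is what makes infrastructure reviewable.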

State management was crucial. Terraform records the resources it manages in a state file and compares that record against both the code and what actually exists in AWS. From the difference it calculates the minimal set of changes, avoiding unnecessary resource recreation.
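Where the state lives matters once more than one person (or a CI job) runs Terraform. A common choice, shown here as an assumption rather than a record of this project's setup, is a remote S3 backend. The bucket name, key, and region are placeholders:

```hcl
# Assumed setup: state stored remotely in S3 so the team and CI share one copy.
# Bucket, key, and region are placeholders.
terraform {
  backend "s3" {
    bucket  = "example-terraform-state"
    key     = "staging/terraform.tfstate"
    region  = "us-east-1"
    encrypt = true # encrypt the state object at rest
  }
}
```

With shared state in place, `terraform plan` prints only the differences between the recorded state, the real resources, and the code.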

The ecosystem was mature. Modules for every AWS service, extensive documentation, and a large community meant I wasn't starting from scratch.

Building Reusable Modules

I structured the Terraform code as reusable modules. Each module encapsulated a logical component: VPC networking, EC2 compute, RDS databases, S3 storage, Auto Scaling groups.

Modules had inputs (variables) and outputs. Want a VPC? Call the VPC module with your CIDR blocks and subnet configuration. It returns VPC ID, subnet IDs, and route table IDs. Other modules consume these outputs.
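A minimal sketch of what such a VPC module might contain. The file layout, variable names, and output names are hypothetical, and route tables are left out for brevity:

```hcl
# modules/vpc/variables.tf (hypothetical layout)
variable "vpc_cidr" {
  description = "CIDR block for the VPC"
  type        = string
}

variable "private_subnet_cidrs" {
  description = "Map of availability zone to CIDR block for private subnets"
  type        = map(string)
}

# modules/vpc/main.tf
resource "aws_vpc" "this" {
  cidr_block = var.vpc_cidr
}

resource "aws_subnet" "private" {
  for_each          = var.private_subnet_cidrs
  vpc_id            = aws_vpc.this.id
  availability_zone = each.key
  cidr_block        = each.value
}

# modules/vpc/outputs.tf
output "vpc_id" {
  description = "ID of the created VPC"
  value       = aws_vpc.this.id
}

output "private_subnet_ids" {
  description = "IDs of the private subnets, one per availability zone"
  value       = [for s in aws_subnet.private : s.id]
}
```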

This composition pattern meant creating a new environment was just a few lines of code. Define the modules you need, pass in environment-specific variables, and Terraform handles the rest.
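To make the "few lines of code" concrete, here is a sketch of an environment definition. It assumes a `vpc` module that exposes `vpc_id` and `private_subnet_ids` outputs and an `rds` module that accepts them; the paths, input names, and values are all illustrative:

```hcl
# environments/staging/main.tf (hypothetical layout and module paths)
module "vpc" {
  source = "../../modules/vpc"

  vpc_cidr = "10.1.0.0/16"
  private_subnet_cidrs = {
    "us-east-1a" = "10.1.1.0/24"
    "us-east-1b" = "10.1.2.0/24"
  }
}

module "database" {
  source = "../../modules/rds"

  # Outputs of one module become inputs of the next.
  vpc_id         = module.vpc.vpc_id
  subnet_ids     = module.vpc.private_subnet_ids
  instance_class = "db.t3.micro" # smaller in staging, larger in production
}
```

A production environment would be the same file with a different CIDR range and larger instance classes.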

Terraform module architecture with composition and reuse

Security by Default

Security couldn't be an afterthought. I built it into every module from the start.

IAM roles with minimal permissions. Each service got only the permissions it needed. EC2 instances couldn't access S3 buckets they didn't own. RDS databases were isolated in private subnets.
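A least-privilege role for an EC2 service could look roughly like this. It is a sketch under assumptions: the role names, the `app_bucket_arn` input, and the choice of S3 actions are illustrative, not taken from the project:

```hcl
variable "app_bucket_arn" {
  description = "ARN of the one bucket this service owns (hypothetical input)"
  type        = string
}

# Trust policy: only the EC2 service may assume this role.
data "aws_iam_policy_document" "assume_ec2" {
  statement {
    actions = ["sts:AssumeRole"]

    principals {
      type        = "Service"
      identifiers = ["ec2.amazonaws.com"]
    }
  }
}

resource "aws_iam_role" "app" {
  name               = "example-app-role"
  assume_role_policy = data.aws_iam_policy_document.assume_ec2.json
}

# Permissions: read and write objects in the service's own bucket only.
data "aws_iam_policy_document" "app_s3" {
  statement {
    actions   = ["s3:GetObject", "s3:PutObject"]
    resources = ["${var.app_bucket_arn}/*"]
  }
}

resource "aws_iam_role_policy" "app_s3" {
  name   = "own-bucket-access"
  role   = aws_iam_role.app.id
  policy = data.aws_iam_policy_document.app_s3.json
}

# Instance profile that attaches the role to EC2 instances.
resource "aws_iam_instance_profile" "app" {
  name = "example-app-profile"
  role = aws_iam_role.app.name
}
```

Because the policy names a single bucket ARN, an instance with this profile has no access to any other bucket.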

Encryption everywhere. S3 buckets used KMS encryption. RDS databases encrypted data at rest. EBS volumes attached to EC2 instances were encrypted by default.
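These encryption defaults can be expressed in a few resources. This is a sketch with placeholder names, not the project's actual module:

```hcl
# Customer-managed key for data at rest, with yearly rotation enabled.
resource "aws_kms_key" "data" {
  description         = "Example key for data at rest"
  enable_key_rotation = true
}

resource "aws_s3_bucket" "assets" {
  bucket = "example-assets-bucket" # placeholder; bucket names are globally unique
}

# Every object written to the bucket is encrypted with the KMS key by default.
resource "aws_s3_bucket_server_side_encryption_configuration" "assets" {
  bucket = aws_s3_bucket.assets.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm     = "aws:kms"
      kms_master_key_id = aws_kms_key.data.arn
    }
  }
}

# Account- and region-wide setting: every new EBS volume is encrypted.
resource "aws_ebs_encryption_by_default" "this" {
  enabled = true
}
```

For RDS, the equivalent switch is `storage_encrypted = true` on the `aws_db_instance` resource, optionally with `kms_key_id`.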

Secrets management via AWS Secrets Manager. No hardcoded credentials in code or environment variables. Applications fetched credentials at runtime with automatic rotation.
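One way to keep database credentials out of code is to let RDS manage the master password in Secrets Manager. This is an assumption about how it could be done, not necessarily what this project used. It needs a recent version of the AWS provider, and the identifiers and sizes are placeholders:

```hcl
resource "aws_db_instance" "app" {
  identifier        = "example-app-db"
  engine            = "postgres"
  instance_class    = "db.t3.micro"
  allocated_storage = 20
  username          = "app_admin"

  # RDS creates the master password in Secrets Manager and manages its
  # rotation, so no password appears in code or variables.
  manage_master_user_password = true

  storage_encrypted   = true
  skip_final_snapshot = true # acceptable for a throwaway example only
}

# Expose only the secret's ARN; the application reads the value at runtime.
output "db_secret_arn" {
  value = aws_db_instance.app.master_user_secret[0].secret_arn
}
```

The application's IAM role then needs `secretsmanager:GetSecretValue` on that one ARN and nothing broader.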

Layered security: IAM roles, KMS encryption, and Secrets Manager

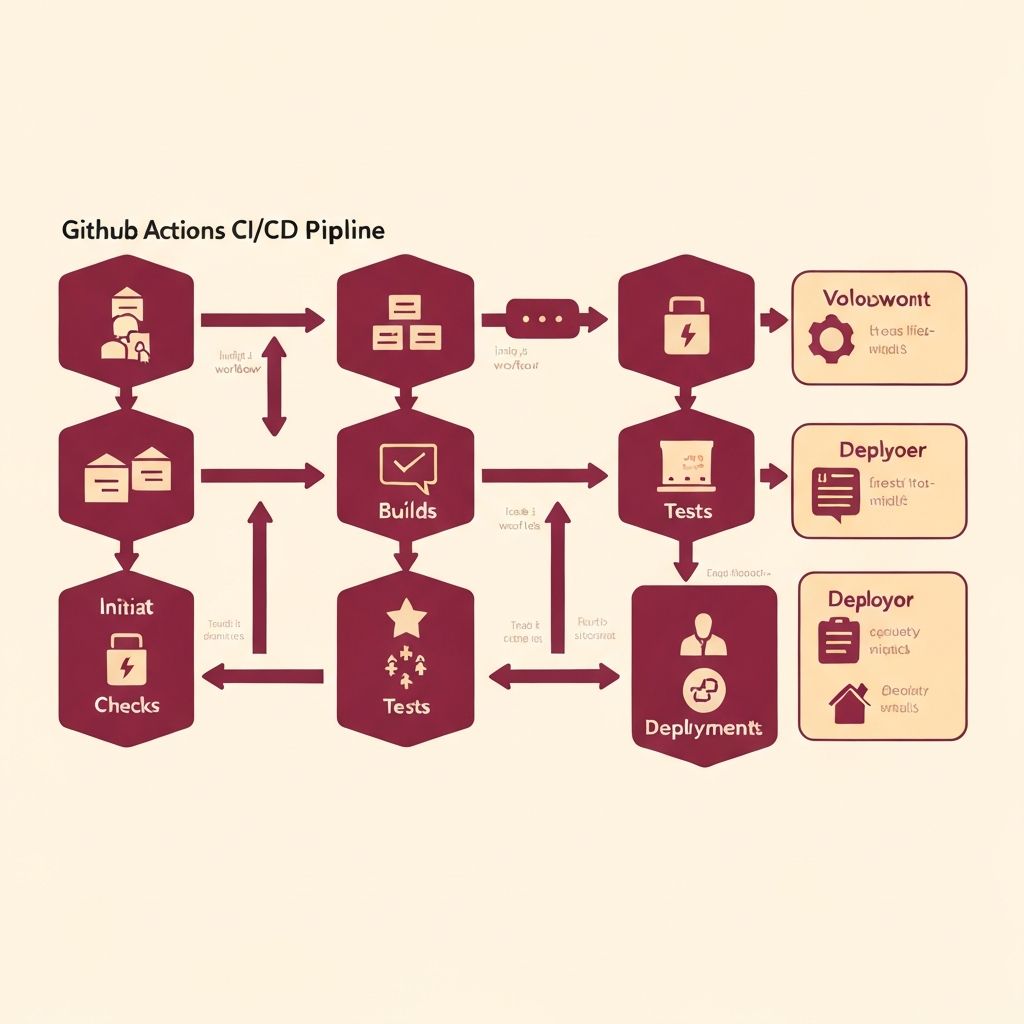

Automated CI/CD Pipeline

Manual terraform apply commands weren't much better than clicking in the console. I built a GitHub Actions workflow that automated everything.

On pull requests: terraform plan runs, showing exactly what would change. Reviewers see the infrastructure diff alongside code changes.

On merge to main: terraform apply runs automatically for staging. Changes deploy within minutes.

For production: a manual approval step. A senior engineer reviews, approves, and production updates.

The pipeline also ran terraform fmt -check and terraform validate, catching formatting drift and invalid configuration before deployment.

Automated CI/CD pipeline from code commit to production deployment

Monitoring and Observability

Infrastructure isn't useful if you don't know when it's failing. I extended the Terraform setup to include comprehensive CloudWatch monitoring.

Custom dashboards for each service. EC2 CPU and memory utilization (memory requires the CloudWatch agent, since EC2 doesn't publish it by default). RDS connection counts and query performance. Auto Scaling group health and desired capacity.

Alarms for critical issues. If an EC2 instance becomes unhealthy, trigger Auto Scaling replacement. If RDS connections saturate, alert on-call engineers.
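As a sketch of the RDS connection alarm, assuming an SNS topic that pages the on-call engineer. The threshold, topic name, and variable names are placeholders to tune per database:

```hcl
variable "db_instance_id" {
  description = "Identifier of the RDS instance to watch (hypothetical input)"
  type        = string
}

variable "max_connections_threshold" {
  description = "Connection count treated as saturation (placeholder default)"
  type        = number
  default     = 80
}

resource "aws_sns_topic" "on_call" {
  name = "example-on-call-alerts"
}

# Fires when average connections stay above the threshold for 5 minutes.
resource "aws_cloudwatch_metric_alarm" "rds_connections" {
  alarm_name          = "example-rds-connections-high"
  namespace           = "AWS/RDS"
  metric_name         = "DatabaseConnections"
  statistic           = "Average"
  comparison_operator = "GreaterThanThreshold"
  threshold           = var.max_connections_threshold
  period              = 60
  evaluation_periods  = 5

  dimensions = {
    DBInstanceIdentifier = var.db_instance_id
  }

  alarm_actions = [aws_sns_topic.on_call.arn]
}
```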

Load Balancers with health checks. Unhealthy instances automatically removed from rotation. Traffic flows only to healthy instances.
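The health check lives on the load balancer's target group. A minimal sketch, assuming the application exposes a `/health` endpoint (hypothetical) and with illustrative intervals and thresholds:

```hcl
variable "vpc_id" {
  description = "VPC that contains the application instances"
  type        = string
}

resource "aws_lb_target_group" "app" {
  name     = "example-app-tg"
  port     = 80
  protocol = "HTTP"
  vpc_id   = var.vpc_id

  # An instance is pulled from rotation after 3 failed checks
  # and returns after 2 consecutive successes.
  health_check {
    path                = "/health" # hypothetical endpoint
    interval            = 30
    timeout             = 5
    healthy_threshold   = 2
    unhealthy_threshold = 3
    matcher             = "200"
  }
}
```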

This went beyond the course requirements, but observability is what makes infrastructure reliable in production.

CloudWatch dashboard providing real-time infrastructure visibility

Auto Scaling for Resilience

Traffic isn't constant. We needed infrastructure that scaled with demand.

I configured Auto Scaling groups with policies based on CloudWatch metrics. When average CPU utilization stayed above 70% for 5 minutes, the group added instances. When it stayed below 30% for 10 minutes, it removed them.

This required careful tuning. Scale up too aggressively, and you waste money. Scale down too aggressively, and you can't handle traffic spikes.
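The 70% and 30% thresholds map onto a pair of simple scaling policies, each triggered by its own CloudWatch alarm. This is a sketch: the one-instance step size, the cooldowns, and the `asg_name` input are assumptions, and those are the knobs the tuning is about:

```hcl
variable "asg_name" {
  description = "Name of the Auto Scaling group to scale (hypothetical input)"
  type        = string
}

resource "aws_autoscaling_policy" "scale_out" {
  name                   = "example-scale-out"
  autoscaling_group_name = var.asg_name
  adjustment_type        = "ChangeInCapacity"
  scaling_adjustment     = 1 # add one instance
  cooldown               = 300
}

resource "aws_cloudwatch_metric_alarm" "cpu_high" {
  alarm_name          = "example-cpu-high"
  namespace           = "AWS/EC2"
  metric_name         = "CPUUtilization"
  statistic           = "Average"
  comparison_operator = "GreaterThanThreshold"
  threshold           = 70
  period              = 300 # one 5-minute window
  evaluation_periods  = 1
  dimensions          = { AutoScalingGroupName = var.asg_name }
  alarm_actions       = [aws_autoscaling_policy.scale_out.arn]
}

resource "aws_autoscaling_policy" "scale_in" {
  name                   = "example-scale-in"
  autoscaling_group_name = var.asg_name
  adjustment_type        = "ChangeInCapacity"
  scaling_adjustment     = -1 # remove one instance
  cooldown               = 300
}

resource "aws_cloudwatch_metric_alarm" "cpu_low" {
  alarm_name          = "example-cpu-low"
  namespace           = "AWS/EC2"
  metric_name         = "CPUUtilization"
  statistic           = "Average"
  comparison_operator = "LessThanThreshold"
  threshold           = 30
  period              = 300
  evaluation_periods  = 2 # two 5-minute windows = 10 minutes
  dimensions          = { AutoScalingGroupName = var.asg_name }
  alarm_actions       = [aws_autoscaling_policy.scale_in.arn]
}
```

Scaling in more slowly than scaling out (10 minutes versus 5) is a deliberate bias toward keeping capacity during bursty traffic.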

I also added Route 53 health checks and failover. If the primary endpoint fails its health checks, for example during an availability zone outage, Route 53 shifts DNS traffic to a healthy endpoint. Most users see little or no disruption, limited mainly by the DNS TTL.
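A failover setup of this kind can be sketched with one health check and a pair of failover records. The domain name, the `/health` path, and every variable here are hypothetical:

```hcl
variable "zone_id" {
  description = "Route 53 hosted zone ID"
  type        = string
}

variable "primary_lb_dns_name" {
  type = string
}

variable "primary_lb_zone_id" {
  type = string
}

variable "secondary_lb_dns_name" {
  type = string
}

variable "secondary_lb_zone_id" {
  type = string
}

# Probe the primary endpoint every 30 seconds; 3 failures mark it unhealthy.
resource "aws_route53_health_check" "primary" {
  fqdn              = var.primary_lb_dns_name
  port              = 443
  type              = "HTTPS"
  resource_path     = "/health"
  failure_threshold = 3
  request_interval  = 30
}

resource "aws_route53_record" "primary" {
  zone_id         = var.zone_id
  name            = "app.example.com"
  type            = "A"
  set_identifier  = "primary"
  health_check_id = aws_route53_health_check.primary.id

  failover_routing_policy {
    type = "PRIMARY"
  }

  alias {
    name                   = var.primary_lb_dns_name
    zone_id                = var.primary_lb_zone_id
    evaluate_target_health = true
  }
}

# Served only while the primary record is unhealthy.
resource "aws_route53_record" "secondary" {
  zone_id        = var.zone_id
  name           = "app.example.com"
  type           = "A"
  set_identifier = "secondary"

  failover_routing_policy {
    type = "SECONDARY"
  }

  alias {
    name                   = var.secondary_lb_dns_name
    zone_id                = var.secondary_lb_zone_id
    evaluate_target_health = true
  }
}
```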

Auto Scaling responding to traffic patterns with automatic capacity adjustment

The Results

The impact was immediate. Creating a new environment dropped from as much as 3 hours to 10 minutes. Consistency improved too: every environment used identical infrastructure, just at a different scale.

The team's velocity increased. Engineers self-served infrastructure by opening pull requests. No more waiting for DevOps to manually provision resources.

Security posture improved. Every deployment included encryption, minimal IAM permissions, and secrets management. Security reviews happened in pull requests, not after deployment.

Most importantly, reliability improved. Auto Scaling handled traffic spikes automatically. CloudWatch alerts caught issues before users noticed. And infrastructure failures self-healed through automatic replacement.

Before and after: manual 3-hour deployments vs automated 10-minute deployments

What I Learned

This project taught me that infrastructure and application code deserve the same rigor. Version control, code review, automated testing, CI/CD—these practices aren't just for application development.

I learned that security is easier to build in from the start than to retrofit later. Starting with encrypted storage, minimal IAM roles, and secrets management as defaults meant every environment was secure by default.

I also learned the value of observability. Infrastructure that "works" isn't enough. You need to know how it's performing, where bottlenecks are, and when failures occur. CloudWatch dashboards and alarms transformed infrastructure from opaque to transparent.

The system now powers multiple production environments, handling millions of requests daily across multiple AWS regions. And when someone asks for a new environment, the answer is "pull request, please."

Let's Connect

Contact Information

Email: buildwithari.dev@gmail.com

Location: Boston, MA